
Category Archives: CHI 2018

Paper length in the reformulated Papers track

Headlines

- There are no Notes at CHI 2018. Papers with a length proportional to their contribution were invited in the CfP.

- There are no Note-length papers in the programme this year – the shortest papers have five pages of content.

- Only 7% of accepted submissions have 7 or fewer content pages.

- Papers with 10 pages of content make up 81% of all accepted submissions.

- Comparing accepted with rejected submissions, longer submissions appear more likely to be accepted.

- Length somewhat predicts scores. Scores predict acceptance. Length somewhat predicts acceptance.

The Papers track at CHI

CHI Notes, short submissions with a four-page limit, were introduced at CHI 2006. In a twist of fate, CHI 2006 took place in Montréal and with CHI 2018, which is also in Montréal, CHI Notes are no longer part of the programme. The last 12 CHIs have had Papers and Notes side by side from submission through to presentation. Papers had a 10 page limit. Notes had a 4 page limit. (Latterly, these limits have excluded references.) The reviewing process for Notes and Papers was the same. They were published in the same format. Notes, however, often fared badly in terms of relative acceptance rates. Table 1 shows the relative acceptance rates for Notes and Papers at past CHIs. It has been more challenging to get notes accepted at CHI.

Table 1. Historical acceptance rates of Papers and Notes

| Year | Papers acceptance rate | Notes acceptance rate |
|---|---|---|
| 2016 | 27.3% | 14.5% |
| 2015 | 24.8% | 17.8% |
| 2014 | 26.7% | 13.6% |
| 2013 | 23.5% | 12.3% |

Furthermore, the rates of Paper and Note submissions have diverged over recent CHIs. The number of submissions of Papers has increased substantially. Notes, already submitted in smaller numbers, have not kept up.

Finally, Notes and Papers were given different lengths of talk slots at previous CHI conferences. Having to accommodate two different durations of talks complicated the planning process for the sessions at the conference.

With all of this in mind, the decision was taken to drop Notes from the conference, and instead accept papers of any length up to the limit of ten pages to the Papers track.

Paper lengths – accepted submissions

Have Notes ‘lived on’ in the new Papers track? Are we still getting those kinds of short papers? First we will consider accepted papers. When authors of accepted submissions were preparing their final submissions, we asked them to record how many pages of content were in their manuscript. This allows us to augment our data from the number of pages in the submitted PDF, which also includes references.

The mean number of content pages was 9.6 (SD=1.14). The mean number of pages, including references, was 12.1 (SD=1.8). On average, papers came with 2.6 pages of references (SD=1.1). The two shortest submissions were 4.5 pages long. So many of the submissions (512, 77%) report 10 pages of content that a histogram is not informative. These data are tabulated instead, rounded to the nearest whole page (e.g., 4.5 pages and 5 pages are both rounded to five whole pages).

Table 2. Author indicated content length of accepted submissions

| Number of content pages | Frequency | % of all accepted submissions |
|---|---|---|
| 5 | 10 | 2% |
| 6 | 18 | 3% |
| 7 | 20 | 3% |
| 8 | 26 | 4% |
| 9 | 51 | 8% |
| 10 | 541 | 81% |

These data seem to show that there are no Note-length papers being presented at CHI this year. Shorter papers (5-7 pages of content) account for only 7% of all of the papers being presented at the conference. Does this reflect reviewers’ historical preference for longer papers, or has the loss of the Note format encouraged people to submit generally longer papers?

Paper lengths – rejected submissions

Is there a preference for longer papers, or do acceptances simply reflect the underlying distribution of submissions? We do not have the number of content pages for rejected papers, because this information was not solicited from authors. However, from the accepted submissions we know that, on average, 79% of the total length of accepted submissions is made up of content. We can use this to estimate the number of content pages in the rejected submissions (NB – rejects only, not withdrawn, quick reject papers etc) made to the papers track from the length of the PDFs submitted. This yields Table 3.
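The estimation step described above can be sketched in a few lines. This is only an illustration of the arithmetic, not the script that was actually used: the function name and the example PDF lengths are made up, and the only figures taken from the post are the 79% content share and the means it is derived from (9.6 content pages out of 12.1 total pages).

```python
# Share of a submission's total length that is content, derived from the
# accepted papers (mean 9.6 content pages out of 12.1 total pages).
CONTENT_SHARE = 0.79


def estimate_content_pages(total_pdf_pages: float, share: float = CONTENT_SHARE) -> float:
    """Estimate the number of content pages from the length of the submitted PDF."""
    return total_pdf_pages * share


# Hypothetical PDF lengths, for illustration only (not real submissions).
for pdf_pages in (6, 10, 13):
    estimate = estimate_content_pages(pdf_pages)
    print(f"{pdf_pages} PDF pages -> about {estimate:.1f} content pages")
```

Because the same share is applied to every rejected submission, a paper with an unusually long or short reference list will be over- or under-estimated, which is one reason the resulting figures should be read as rough estimates.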

Table 3. Estimated content length of rejected submissions

| Estimated number of content pages | Frequency | % of all rejected submissions |
|---|---|---|
| 4 | 62 | 3% |
| 5 | 211 | 11% |
| 6 | 116 | 6% |
| 7 | 165 | 9% |
| 8 | 403 | 21% |
| 9 | 241 | 13% |
| 10 | 677 | 36% |

Recall that these are only estimates. Nevertheless, these estimates seem to suggest a much more even distribution of paper content lengths amongst the rejected submissions. The accepted papers seem to be disproportionately 10 pages in length, compared to rejected papers.
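One way to make the comparison concrete is to put the two tables side by side and work out, for each page count, what share of submissions of that length ended up accepted. The sketch below does this with the frequencies from Tables 2 and 3. It mixes author-reported lengths (accepted) with estimated lengths (rejected), so the resulting percentages are indicative at best; the variable and function names are our own.

```python
# Frequencies copied from Table 2 (accepted, author-reported content pages)
# and Table 3 (rejected, estimated content pages).
accepted = {5: 10, 6: 18, 7: 20, 8: 26, 9: 51, 10: 541}
rejected_est = {4: 62, 5: 211, 6: 116, 7: 165, 8: 403, 9: 241, 10: 677}


def accepted_share(pages: int) -> float:
    """Share of submissions of a given content length that were accepted."""
    a = accepted.get(pages, 0)
    r = rejected_est.get(pages, 0)
    return a / (a + r)


for pages in sorted(rejected_est):
    print(f"{pages:>2} content pages: {accepted_share(pages):.1%} accepted")
```

On these numbers the accepted share rises steeply at the 10-page mark, which is the pattern described in the paragraph above.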

Relationship between score and length

Are longer papers more likely to be accepted, then? We looked at the relationship between the average score a paper received and its total (i.e., including references) length.

Figure 1. Relationship between mean submission score and submission length in pages

This can be inferred from what has already been reported, but higher scores imply acceptance and longer submissions imply higher scores.

It seems clear that CHI reviewers want to see a CHI-sized contribution to get them tending toward accepting submissions. Whether this preference reflects a preference for longer papers or a preference for the more substantial contribution that longer papers should be able to make is not something that we can assess using the data at our disposal, but we might speculate.

It might be that the kind of disciplines that CHI encompasses generally don’t produce outputs that are well suited to low page limits (consider, e.g., work reporting the results of an interview study). It might also be that experienced researchers perceive full-length papers to have more cachet (e.g., in the context of tenure or national research assessments) and so focus their efforts on full-length papers. It might also be the case that shorter submissions represent work that has been quickly –and therefore not fully– developed. These submissions might be of lower quality. All of these factors might play a role in the relationship between length and acceptance, amongst many others. We can’t be sure of the answer, but perhaps all of these things are worth considering when you come to submit your CHI 2019 papers in September.

Anna Cox and Mark Perry

Technical Programme Chairs, ACM CHI 2018

Sandy Gould

Analytics Chair, ACM CHI 2018

CHI 2018 – Looking at the big picture

The gargantuan effort of soliciting, submitting and assessing submissions at CHI is over for the 2018 programme. (The process for 2019 has already started!) In this blog post we look across all of the tracks to see CHI’s big picture. How many submissions did we get? How many were accepted? For whatever reasons you might want to know these statistics – comparisons across different years, assessment of your own success rates against track averages, comparison to other conferences, or something else – we have tried to be as open as possible here about the conference data for the broad set of submissions and reviews.

Overall submissions

CHI 2018 solicited submissions to 14 tracks. Across all of these tracks we had a total of 3955 submissions. The conference is, unsurprisingly, dominated by the Papers track (66% of all submissions to the conference). Late Breaking Work is also a significant contributor (16%). The rest of the tracks combined account for 18% of the submissions to the conference.

| Track | Submissions | Accepted | Acceptance rate |
|---|---|---|---|
| Papers | 2590 | 666 | 25.7% |
| LBW | 642 | 256 | 39.9% |
| Demonstrations | 130 | 77 | 59.2% |
| Workshops and Symposia | 108 | 43 | 39.8% |
| Student Design Competition | 79 | 12 | 15.2% |
| Video showcase | 69 | 15 | 21.7% |
| Doctoral Consortium | 68 | 20 | 29.4% |
| Case studies | 64 | 21 | 32.8% |
| alt.chi | 61 | 16 | 26.2% |
| Student Research Competition | 43 | 24 | 55.8% |
| Courses | 38 | 25 | 65.8% |
| Art | 24 | 14 | 58.3% |
| SIGs | 23 | 19 | 82.6% |
| Panels | 16 | 8 | 50.0% |

Table 1: Submissions and acceptance rate by track.

A complete listing of all tracks, sorted by number of submissions, is provided in Table 1. Note that some of these numbers vary slightly from data you may have seen previously: this is because some submissions may have been removed from the submission system (for example, if they were withdrawn).

The Student Design Competition had the lowest acceptance rate (15.2%). SIGs were the most likely to be accepted (82.6%). Of course, the overall acceptance rate is skewed by the fact that we have so many submissions to the Papers track. The average unweighted acceptance rate (i.e., the mean of the individual track acceptance rates) is 43%.
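The difference between the unweighted average and a pooled rate can be reproduced directly from the table. The snippet below is a small sketch using the submission and acceptance counts listed above; the variable names are ours. The unweighted figure treats every track equally, while the pooled figure divides all acceptances by all submissions and so is dominated by the Papers track.

```python
# (submissions, accepted) per track, copied from Table 1.
tracks = {
    "Papers": (2590, 666),
    "LBW": (642, 256),
    "Demonstrations": (130, 77),
    "Workshops and Symposia": (108, 43),
    "Student Design Competition": (79, 12),
    "Video showcase": (69, 15),
    "Doctoral Consortium": (68, 20),
    "Case studies": (64, 21),
    "alt.chi": (61, 16),
    "Student Research Competition": (43, 24),
    "Courses": (38, 25),
    "Art": (24, 14),
    "SIGs": (23, 19),
    "Panels": (16, 8),
}

total_submitted = sum(s for s, _ in tracks.values())
total_accepted = sum(a for _, a in tracks.values())

# Pooled rate: every submission counts once, so large tracks dominate.
pooled_rate = total_accepted / total_submitted

# Unweighted rate: the mean of the per-track rates, each track counting once.
unweighted_rate = sum(a / s for s, a in tracks.values()) / len(tracks)

print(f"Total submissions: {total_submitted}")
print(f"Pooled acceptance rate: {pooled_rate:.1%}")
print(f"Unweighted mean of track rates: {unweighted_rate:.1%}")
```

On these counts the pooled rate comes out at roughly 31%, noticeably lower than the 43% unweighted average, because the large Papers track has a below-average acceptance rate.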

Thank you everyone who submitted their work to CHI 2018! High quality submissions are the lifeblood of any conference.

Overall external reviews

Of course, all 3955 submissions had to have some kind of review, whether through the Paper track’s full peer-review, or through a slightly more lightweight approach. Here we consider just the external reviewers in the process – we’ll consider committee members in the next section.

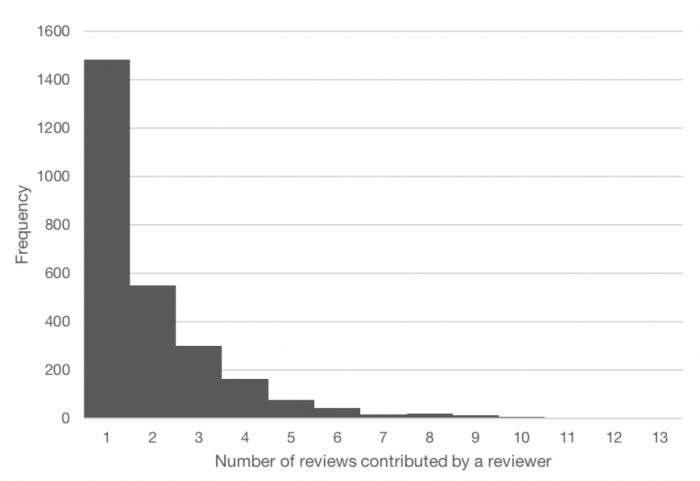

Figure 1: Number of reviews contributed by each reviewer in the Papers track. This is a plot of the data from a previous blog post.

The conference defines ‘Refereed’ content as that which is rigorously reviewed by members of the program committee and peer experts. The process includes an opportunity for authors to respond to referees’ critiques. Submitters can expect to receive formal feedback from reviewers, and the program committee may ask authors for specific changes as a condition of publication. Reviewers are also involved in ‘Juried’ content (Late Breaking Work, Workshops/Symposia, Case Studies, alt.chi, Student Design and Research Competitions). Note that some tracks do not have external reviewers because they are ‘Curated’ (Panels, Courses, Doctoral Consortium, EXPO, Special Interest Group (SIG) meetings, Video Showcase), and while these tracks are highly selective, they do not usually follow a committee-based reviewing process. Fuller details on these processes can be found in Selection Processes on the CHI website.

In total, submissions to the tracks that made use of external reviewers generated 1.93 external reviews per submission. The Papers track attracted the lion’s share of reviews (73.9%), averaging 2.01 external reviews per submission. Outside the Papers and Late Breaking Work tracks, far fewer external reviews (516, or 7.5% of the total) were written, averaging 1.65 reviews per submission. Although 3837 non-committee reviewers are listed here across all tracks, we have not collated the contributions of individual reviewers across tracks, so we cannot yet say how many unique CHI external reviewers we had this year across all tracks.

Table 2 contains information for each track on the number of reviewers for a track and the total number of reviews that they produced. Note once again that these breakdowns are based on final, end-of-process data and may vary very slightly from what has been reported previously (e.g., due to reviewers being added, or incomplete reviews being removed from the system).

| Track | Reviewers | Reviews |
|---|---|---|
| Papers | 2648 | 5061 |
| LBW | 874 | 1267 |
| Workshops and Symposia | 131 | 219 |
| alt.chi | 100 | 138 |
| Case studies | 40 | 83 |
| Student Design Competition | 44 | 76 |

Table 2: External reviewers and number of reviews by track
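For anyone wanting to check the headline ratios, the sketch below combines the review counts in Table 2 with the submission counts from Table 1 for the same six tracks. It is a worked example rather than the original analysis; the dictionary and variable names are ours.

```python
# Submission counts (Table 1) and external review counts (Table 2)
# for the six tracks that used external reviewers.
submissions = {
    "Papers": 2590,
    "LBW": 642,
    "Workshops and Symposia": 108,
    "alt.chi": 61,
    "Case studies": 64,
    "Student Design Competition": 79,
}
reviews = {
    "Papers": 5061,
    "LBW": 1267,
    "Workshops and Symposia": 219,
    "alt.chi": 138,
    "Case studies": 83,
    "Student Design Competition": 76,
}

total_reviews = sum(reviews.values())
reviews_per_submission = total_reviews / sum(submissions.values())
papers_share = reviews["Papers"] / total_reviews

# Tracks other than Papers and Late Breaking Work.
other = [t for t in reviews if t not in ("Papers", "LBW")]
other_reviews = sum(reviews[t] for t in other)
other_per_submission = other_reviews / sum(submissions[t] for t in other)

print(f"External reviews per submission: {reviews_per_submission:.2f}")
print(f"Papers share of external reviews: {papers_share:.1%}")
print(f"Other tracks: {other_reviews} reviews, {other_per_submission:.2f} per submission")
```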

Thank you everyone who provided an external review for CHI 2018. People know that when they see work at CHI, it has been rigorously reviewed by experts. Without your reviews we wouldn’t be able to put together a programme of this quality.

Overall committee reviews

Submissions need reviewers. Committee members help to find them. Once reviewers give their opinion, someone has to synthesise them to come to a decision. Committee members do this too.

Between them CHI 2018 committee members wrote 6,321 reviews, meta-reviews or opinions. This is not far off the total number of external reviews – committee members work extremely hard in their roles. Once again, the Papers track generates the vast majority of the work (83.8%) for the committee in terms of reviews or meta-reviews written.

| Track | Committee members | Committee/Meta reviews |
|---|---|---|
| Papers | 319 | 5295 |
| LBW | 112 | 664 |
| Workshops and Symposia | 28 | 108 |
| Student Design Competition | 3 | 73 |
| alt.chi | 12 | 61 |
| Case studies | 8 | 61 |
| Video showcase | 7 | 59 |

Table 3: Committee members and number of reviews by track (NB – includes only PC members with meta-reviewing responsibility and not, for instance, track chairs).

Table 3 shows the contributions of committee members to the different tracks. Note that, again, these figures may not match up precisely because of changes over time (for instance, data not appearing in the correct place, or a switching of 1AC and 2AC roles during the process). Also note that not all tracks have a process that involves committee members writing formal reviews.

The CHI 2018 committee members have worked meticulously, often on a large number of submissions, to make sure that the right decisions are made for the conference programme. We thank all of them for their efforts.

Summary

As you can see, the CHI process generates huge volumes of work – for those working on committees, and as reviewers, as well as for authors, and while we have tried to minimise the effort for everyone involved, the review process requires vast amounts of time to ensure its sustained quality of outputs and topical relevance. Here we have only quantified the number of submissions and the amount of work that these submissions generate for reviewers and committee members. Of course, not all the tracks at CHI generate work that can be measured using the metrics we have used in this post, nor is this a complete account of thousands of hours of organizational work and planning that also has to take place for all of these parts to come together. We can only run a conference of this scale and quality with the dedicated effort of the researchers and practitioners that offer their time, energy, and expertise. The ACM CHI conference stands at the forefront of its discipline, but its pillars are supported by the community it serves. We thank those who have stepped up to do their bit, and encourage the next generation of researchers emerging to help take on these demanding but rewarding roles. The future of the conference lies in your hands!

Anna Cox and Mark Perry

Technical Programme Chairs, ACM CHI 2018

Sandy Gould

Analytics Chair, ACM CHI 2018

Post-Program Committee analyses, Part 1: What’s been (conditionally) accepted?

This is the first of a series of blog-posts about the outcome of the CHI 2018 Program Committee meeting and elements of the wider submission-handling process. In this short post, we focus on the conditional acceptance and rejection of submissions to the Papers track.

Future posts will consider, amongst other things, the role of rebuttals in conditional acceptance, the effect of virtual and physical subcommittees on conditional acceptance and year-on-year reviewer turnover. We hope these analyses will give you more insight into how the process works.

The TPC team

Headlines

- 667 papers were conditionally accepted, for a conditional acceptance rate of 25.7%. This is close to the average 2012-16 acceptance rate for Papers (not including Notes) of 25.1%.

- The mean of mean scores for accepted papers was 3.73.

- The lowest scoring paper with a conditional accept had an average score of 2.25. (So there’s always hope!)

- Only two papers with a mean score of 3.5 were rejected. (Above average scores are not a guarantee of conditional acceptance.)

Overall distribution of scores

The mean of mean scores across all papers was 2.56, which was unchanged from the average prior to the rebuttal period. (A full analysis of rebuttals will follow in a separate blog post.) The distribution is bimodal, with a relatively small number of means at or closely around 3.0.

Apart from submissions that were quick or desk rejected, each manuscript received at least four reviews: two external and two internal. In total, 10391 individual scores were given to papers. The modal score was 2.0, and 49% of reviews gave a submission a score of 2.0 or 2.5. Just under 16% of review scores were 4.0 or greater.

Table of final score frequency for all reviews

| Review score | Frequency |
|---|---|
| 1 | 495 |
| 1.5 | 1161 |
| 2 | 2659 |
| 2.5 | 2434 |
| 3 | 739 |
| 3.5 | 1277 |
| 4 | 1115 |
| 4.5 | 381 |
| 5 | 130 |
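The summary figures quoted above (total number of scores, modal score, and the shares at 2.0 to 2.5 and at 4.0 or more) follow directly from this frequency table. A short sketch of the calculation, with variable names of our own choosing:

```python
# Frequency of each final review score, copied from the table above.
score_counts = {
    1.0: 495,
    1.5: 1161,
    2.0: 2659,
    2.5: 2434,
    3.0: 739,
    3.5: 1277,
    4.0: 1115,
    4.5: 381,
    5.0: 130,
}

total_scores = sum(score_counts.values())

# The modal score is the one given most often.
modal_score = max(score_counts, key=score_counts.get)

share_2_to_2_5 = (score_counts[2.0] + score_counts[2.5]) / total_scores
share_4_plus = sum(n for s, n in score_counts.items() if s >= 4.0) / total_scores

print(f"Total scores: {total_scores}")
print(f"Modal score: {modal_score}")
print(f"Scores of 2.0 or 2.5: {share_2_to_2_5:.1%}")
print(f"Scores of 4.0 or more: {share_4_plus:.1%}")
```

The dip at 3.0 relative to 2.5 and 3.5 is also visible in the raw counts, which is what gives the distribution its bimodal shape.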

Conditionally accepted papers

The 667 conditionally accepted papers ranged in their average score from 2.25 to 5 (M=3.73). Twenty-one papers (3% of conditionally accepted submissions and 0.8% of all submissions) have been conditionally accepted with an average score of less than 3. After rebuttals, three papers had a mean score of 5. There were 213 submissions (8% of all submissions, 32% of conditional accepts) with a mean score of 4 or more.

Each paper had several reviews. The score of a given review usually varies from the average score for a paper. Therefore, a standard deviation of scores for each submission can be calculated. The mean of these standard deviations for conditionally accepted papers was 0.46, so scores on conditionally accepted papers were generally within a half-point of the mean of a paper.

Rejected papers

Rejected papers had a mean of mean scores of 2.2. In total, 1267 rejected submissions had an average between 2 and 3. That’s 49% of all submissions and fully 66% of all rejected papers.

The mean of the standard deviations of scores for each paper was 0.28. This implies less variance in the scores for each rejected submission than in conditionally accepted submissions. This might be an artefact of scale compression; if a reviewer is positive about a paper they might give it between a 3.5 and a 5.0. If they don’t, they might give it a 2.5, a 2.0 or a 1.5. Only 12 rejected papers (not counting quick or desk rejections) had a mean score of 1.0. Only 85 papers averaged less than 1.5. Indeed, as we saw earlier, 49% of all scores given to submissions were a 2.0 or a 2.5.

The middle

As you can see from the histogram, a mean score between 2.75 and 3.25 is the cross-over point between rejection and conditional acceptance. Only 14 papers with a score of 3.25 or over have been rejected. Only seven papers with a score of less than 2.75 have been conditionally accepted. Predicting outcomes solely on mean score is therefore difficult between 2.75 and 3.25.

There were 345 (13%) papers that scored ≥2.75 and ≤3.25. On average, these papers received 4.28 reviews, versus 4.01 for all papers, so additional reviewers (e.g., a 3AC) were often brought to bear on these ‘borderline’ papers. Of these 345 papers, 29 had one score (either by an AC or external reviewer) of less than 2.0. Of these, 12 were conditionally accepted and 17 were rejected. This year, significant preparatory work was undertaken to ensure that 3ACs, where requested, were assigned in advance of the PC meeting. This was to ensure sufficient time to thoroughly read papers, reviews, and rebuttals and to produce high-quality reviews.

This year, 1ACs were given a more explicit editorial role than in previous years. 2ACs and potentially 3ACs at the meeting would of course also have had an influence, but what was the effect of the 1AC’s score on the overall decision? Well, 214 of this subset of 345 papers had a 1AC score that was less than 3.0. Of these 214 papers, only 11 were subsequently conditionally accepted. Of the 131 submissions in this subset with a 1AC score of 3.0 or more, 90 were conditionally accepted. This suggests that in this borderline region between 2.75 and 3.25, having the 1AC on-side and positive is essential for success. But then those of you reading this who have submitted in the past will already know that.
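Turning those counts into rates makes the gap easier to see. The sketch below uses only the four numbers quoted in the paragraph above; the names are ours, and the rates are simple proportions rather than the output of any model.

```python
# Borderline papers (mean score between 2.75 and 3.25), split by 1AC score.
# Each entry is (number of papers, number conditionally accepted).
borderline = {
    "1AC score < 3.0": (214, 11),
    "1AC score >= 3.0": (131, 90),
}

for group, (papers, accepted) in borderline.items():
    print(f"{group}: {accepted}/{papers} conditionally accepted ({accepted / papers:.1%})")

total_papers = sum(p for p, _ in borderline.values())
total_accepted = sum(a for _, a in borderline.values())
print(f"All borderline papers: {total_accepted}/{total_papers} ({total_accepted / total_papers:.1%})")
```

Roughly one in twenty borderline papers with a 1AC score below 3.0 went through, against about two in three of those with a 1AC score of 3.0 or more.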

It is also plausible that 1ACs simply uprated their scores to match a positive outcome, but we know from analyses that we have already conducted that this doesn’t seem to be the case. We will explore this in more detail in our next blog post on the effect of rebuttals on outcomes.

Anna Cox and Mark Perry

Technical Programme Chairs, ACM CHI 2018

Sandy Gould

Analytics Chair, ACM CHI 2018

The first part of the review process

This is a brief ‘quick and dirty’ analysis of review data after all reviews were returned. We are aiming to produce more comprehensive analyses of the data in the future, but we wanted to share this information during the rebuttal period in case it is useful to authors in planning their next course of action. We’ve made every effort to ensure these data are accurate, but given the time pressures, results of the full analysis may vary slightly from these data.

The TPC team

Headlines

- The number of papers submitted has increased by 8% on last year.

- Average review scores are very similar to those from CHI2016 (we don’t have the analysis for 2017). There’s no evidence that reviewers or ACs are “more grumpy” this year.

- Rebuttals have been shown to increase average scores (2016 data). We expect the same this year and provide links to useful articles about how to write a rebuttal.

- The new process for reviews has reduced the burden on the CHI community by approximately 2100 reviews.

Number of papers submitted to CHI2018

Back in September, 3029 abstracts (and associated metadata) were submitted. By the final cut-off date, 86% of these turned into complete submissions resulting in 2592 papers. This represents a rise of 8% (172 papers) on the previous year.

Number of reviews

Of these 2592:

- 8 were withdrawn

- 38 were ‘Quick Rejected’

- 7 were ‘Desk Rejected’

- 2 were rejected for other reasons

Quick Rejected = papers that were read and found to be missing something critical, making replication, analysis or validation of claims impossible.

Desk Rejected = papers that were out of scope for CHI or had some obvious error, such as being over the page limit.

The 2537 papers remaining received 5105 external reviews. 41 papers received three external reviews. One received four.

Including papers that have already been withdrawn or rejected, reviews were written by 2651 reviewers. The majority (56%) of reviewers completed only one review. One reviewer completed thirteen.

| Number of reviews per reviewer | Number of reviewers | Yield |
|---|---|---|
| 1 | 1484 | 1484 |
| 2 | 550 | 1100 |
| 3 | 299 | 897 |
| 4 | 163 | 656 |
| 5 | 75 | 375 |
| 6 | 43 | 258 |
| 7 | 16 | 112 |
| 8 | 20 | 160 |
| 9 | 12 | 108 |
| 10 | 7 | 70 |
| 11 | 3 | 33 |
| 12 | 2 | 24 |
| 13 | 1 | 13 |

In addition, 314 ACs (including a few SCs) were assigned to a median of 16 papers (on average, 8 as 1AC, 8 as 2AC). ACs wrote full reviews for 8 papers (as 2AC) and meta-reviews for 8 papers (as 1AC).

Review scores

The mean of the mean scores given to papers for the 2018 conference was 2.56 (SD=0.74). (Yeah, we know we took means of ordinal data here, but luckily there is no R2 of this blog post!). In total, 1356 papers (53%) received an average score between 2.0 and 2.9 (inclusive). Only 129 papers have a mean score of 4.0 or greater. There is one paper with an average of 5.0. The CHI 2016 conference (the last year we have data and detailed analysis for) had an unweighted average of all first round reviewer scores of 2.63 (SD=0.73) and the median was 2.625.

The mean of mean expertise ratings (2018) was 3.22 (SD=0.34) on a 4-pt scale. This indicates that expertise ratings were high across the reviewer pool.

This year ACs were instructed to avoid giving papers a score of 3.0. Instead, they were encouraged to give a score of 2.5 or 3.5 in order to give a clear indication to authors as to whether the AC felt they would be able to argue for the paper, in its current state, before the rebuttal. The intent here was that ACs should not be sitting on the fence and avoiding forming a view on the paper. This seems to have been somewhat successful.

Only 33 1AC scores of 3.0 were given (~1%). 2ACs were much more likely to give scores of 3.0 (195, ~8%) when writing their reviews. There are currently 621 papers (~24%) with 1AC score 3+ and 1971 papers with 1AC score <3. We expect these scores to move, as rebuttals are reviewed and discussed.

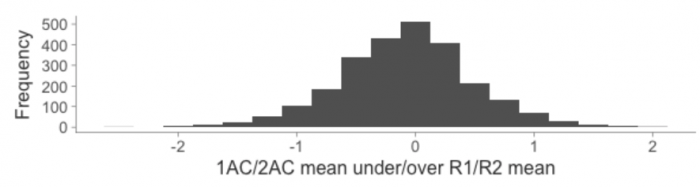

ACs seem to have tracked their reviewers closely in most, but not all, instances. On average, the mean of 1AC and 2AC scores is ~0.09 less than the mean of the R1 and R2 scores.

Writing Rebuttals

We are now in the rebuttal phase. Authors can sometimes wonder whether it’s worth their time to write a rebuttal if their paper has received low scores from reviewers. At this stage, of papers not already rejected, 15.6% of papers have a mean score equal to or greater than 3.5.

- 396 papers with mean score ≥ 3.5

- 2141 papers with mean score < 3.5

While we all understand that papers with higher scores are more likely to be accepted, there is a reason we don’t just auto-accept based on scores, but actually give authors the opportunity to write rebuttals and then discuss the papers at a PC meeting. This important part of the process provides an opportunity for authors to respond to some of the reviewers’ comments and for the committee to select papers.

Two years ago there was an analysis of whether rebuttals change reviewer scores. (TL;DR: they did.) We can therefore expect the number of papers with mean scores above 3.0 to increase when we’ve received the rebuttals and reviewers and ACs have responded to them.

We know that CHI is a highly selective conference. Historic acceptance rates demonstrate that somewhere around 24% of papers are ultimately accepted:

- 23.6% (2012-16) Papers+Notes acceptance rate

- 24.5% 2016 Papers+Notes acceptance rate

- 25.0% 2017 Papers+Notes acceptance rate

Remember, this year there are no more notes so it is also interesting to look at the historic acceptance rates JUST FOR PAPERS

- 25.1% (2012-16) average Papers only acceptance rate

- 27.3% 2016 Papers only acceptance rate

So even if your paper has a mean score and a 1AC score below 3.0 there’s every reason to write your rebuttal so that some of the 2.5 1AC scores can move up to 3.5!

A number of members of our community have provided views on how to write a rebuttal:

- Writing rebuttals by Niklas Elmqvist, University of Maryland, College Park

- Writing CHI Rebuttals by Gene Golovchinsky

- SIGCHI Rebuttals: Some Suggestions How to Write Them by Albrecht Schmidt

- A CHI Rebuttal by Simone O’Callaghan

- How to Write SIGCHI Rebuttals by Hyunyoung Song

Anna Cox and Mark Perry

Technical Programme Chairs, ACM CHI 2018

Sandy Gould

Analytics Chair, ACM CHI 2018

Update your reviewing preferences

The ACM CHI Conference on Human Factors in Computing Systems is the premier international conference of Human-Computer Interaction. If you submitted yesterday, thank you for your patience with PCS. We received a record number of submissions. These now need to be peer reviewed by subject experts – and this is why we are reaching out to you. Without the help of thousands of reviewers, we will be unable to ensure that the standard of reviews, and the consequent recognition that research at the event receives, remains as high as it is.

Please take a moment now to update your reviewing preferences and expertise on PCS:

- Please visit https://precisionconference.com/~sigchi/

- Enter your login credentials

- Click on “volunteer center” in the top menu.

- Complete the steps as indicated if you are a ‘first time’ reviewer

- Or: update the sections marked with “Please update this”

Reviewer Advice:

The selections that you make will determine how you are selected for reviewing papers, so it is important that the content here matches your expertise. Please be realistic, and consider what it is that you are an expert in or are knowledgeable about, but also where you might not have sufficient expertise to peer review an article. We’re asking you to volunteer to review a reasonable number of papers – for every paper submitted, three to five (sometimes more) people will need to review it, and for the conference to work at all, we need a lot of great reviewers (you!) to volunteer their time for multiple papers. A good rule of thumb would be to volunteer to review at least 3 submissions for every submission you make.

If you haven’t reviewed for ACM CHI before, there are many valuable reasons to do so – not least because you get to see how expert reviewers go about assessing papers for when you submit your own papers to the conference. We strongly encourage new reviewers from industry and research students to take part in reviewing; the committee members will balance topic expertise and prior experience to ensure that less experienced researchers will work alongside at least two other highly experienced researchers as reviewers. For those who do have experience of submitting and reviewing for CHI, we will be working with the original version of the submission system, PCS 1, so please ensure that you access the correct site via https://precisionconference.com/~sigchi/.

There are a number of venues at CHI, and while the ‘Papers’ track is the most prominent, other tracks show important and interesting work. If you haven’t reviewed for other venues, such as Late Breaking Work, before, please sign up to review for them on PCS. Some of these tracks are not yet open for volunteering, but will become available very soon. You can find all the CHI 2018 tracks and their deadlines on the CHI 2018 website: https://chi2018.acm.org/authors/. We would be grateful if you would sign up for as many of these as you are able to – reviewing deadlines for these are usually different to the Papers track, so you won’t be overwhelmed with too much reviewing all at the same time.

We really appreciate your enthusiasm and effort in creating a great conference for 2018 – many thanks in advance!

Anna Cox and Mark Perry

Technical Programme Chairs, ACM CHI 2018

Extension to submissions – PCS downtime

As you are aware, PCS – the Precision Conference System that CHI papers are submitted to – has been broken today. There have been several hours where it has been either inaccessible or impossible to submit papers for technical reasons. We are also concerned that the site may be unstable in the next few hours. And also maybe later too. We have therefore decided to extend the deadline to

4pm/1600 Pacific Time (San Francisco, Vancouver),

7pm/1900 Eastern Time (New York, Toronto),

midnight BST (London),

7am/0700 China Standard Time/Singapore Time (Beijing, Singapore),

8am/0800 Japan Standard Time (Tokyo),

9am/0900 Australian Eastern Time (Sydney).
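If your time zone is not listed, the conversion is straightforward to do yourself. The sketch below assumes the extended deadline falls on September 19, 2017 (the date of the original materials deadline) and uses fixed UTC offsets for the daylight-saving rules in force on that date; both are our assumptions, so please double-check against your own clock.

```python
from datetime import datetime, timedelta, timezone

# Assumed: the extended deadline is 4pm Pacific Daylight Time (UTC-7)
# on September 19, 2017.
PDT = timezone(timedelta(hours=-7), "PDT")
deadline = datetime(2017, 9, 19, 16, 0, tzinfo=PDT)

# Fixed UTC offsets (in hours) for mid-September 2017.
offsets = {
    "New York, Toronto (EDT)": -4,
    "London (BST)": 1,
    "Beijing, Singapore": 8,
    "Tokyo (JST)": 9,
    "Sydney (AEST)": 10,
}

for place, hours in offsets.items():
    local = deadline.astimezone(timezone(timedelta(hours=hours)))
    print(f"{place}: {local:%Y-%m-%d %H:%M}")
```

To add another location, put its UTC offset in the `offsets` dictionary.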

Please don’t submit at the last possible moment if you can avoid this.

Big shout out to Australia/New Zealand/other time zones where it is bedtime: #nopaperleftbehind – you can submit tomorrow. Sleep well.

Lots of love TPCs & Paper Chairs

Problems with PCS for Papers submission

We are aware of issues with the submission system (PCS) for submissions of materials for Papers. We are actively working on resolving this issue. We will post regular updates on this page, as well as on Twitter, Facebook, Google+, and across all other social media channels.

Update September 19 2017 at 7am PDT, 2pm GMT, 3pm BST

We are extending the submission deadlines for Papers. Please see this blog post about updated times.

Update September 19 2017 at 5am PDT, 12pm (noon) GMT, 1pm BST

PCS is back online. We continue to monitor the situation.

If you are still experiencing issues, please restart your browser or try a different browser.

Update September 19 2017 at 4am PDT, 11am GMT, 12pm (noon) BST

We are still working on identifying the root cause of this issue.

Next update at 6am PDT, 1pm GMT, 2pm BST, or earlier if we have any updates.

Update September 19 2017 at 9am GMT, 10am BST, 2am PDT

We are aware that PCS is currently unavailable. We are working to find the cause.

Next update at 4am PDT, 11am GMT, 12pm (noon) BST. We will post hourly updates from 9am PDT, 4pm GMT, 5pm BST.

Extension to deadline for CHI2018 Metadata

We are trying to be responsive to the emerging situation on the ground for those affected by the hurricane, and we are aware of the huge difficulties they face and the effort they are putting in to try to submit today.

As you’re aware from the previous posts, we have very little leeway to move any of the deadlines. For more information about why this is so, please see https://chi2018.acm.org/papers-review-process/

However, we want to extend the opportunity for as many people as possible to be able to submit their papers. We are therefore moving today’s deadline to the 14th, giving an extra couple of days. This is the one area where we have any real scope to adjust. The updated deadline is:

- Submission deadline: 12pm (noon) PDT / 3pm EDT September 14, 2017. Title, abstract, authors, subcommittee choices, and other metadata.

Unfortunately, we have no ability to move the final deadline for submissions. This will remain unchanged:

- Materials upload deadline: 12pm (noon) PDT / 3pm EDT September 19, 2017.

Keep safe!

Ed Cutrell, Anind Dey & m.c. schraefel, CHI 2018 Papers Chairs

Anna Cox & Mark Perry, CHI 2018 Technical Program Chairs

Special note to the CHI community in response to the disruptions caused by Harvey and Irma

For all our friends and colleagues preparing for, and recovering from, these catastrophes, our hearts and minds are with you. Watching the news reports and hearing many of the stories coming out of the Caribbean and southern U.S. is truly gut-wrenching. In the past few days, we have received several inquiries about the possibility of a deadline extension for CHI 2018 Papers. Obviously, for those affected by these storms, the preparation of CHI submissions has taken a backseat to much more immediate needs, and we wanted to respond more generally for the whole community.

While we are deeply sympathetic to the disruption these storms have caused, we cannot move the deadline. The CHI papers process is a massive, complex, and somewhat fragile thing—the timing tolerance in going from submissions to reviews to the conference in April is literally on the order of a day or two, and any delays would jeopardize the technical program in Montréal. We have checked to see if there are any creative things we can do, but we are so close to the deadline that there just isn’t any way for us to change the process at this point. We are deeply sorry—we know how important CHI is for students, scholars and professionals in our community and these tragedies hit the whole community.

We hope that all those affected are safe and managing the aftermath with their loved ones. We stand with you and hope to see you all in Montréal next spring.

Ed Cutrell, Anind Dey & m.c. schraefel, CHI 2018 Papers Chairs

Anna Cox & Mark Perry, CHI 2018 Technical Program Chairs

Welcoming Submissions to the Panels & Fireside Chats Venue

At CHI 2018, the theme will be engage. The technical and social programs at CHI will provide several ways for attendees to engage in discussion. One of these is through the curated Panels & Fireside Chats Venue. We are inviting stimulating panel submissions that inspire new ideas, are innovative in approach, and likely to engage the conference attendees.

One change this year is that we are welcoming a range of formats for the presentation of panels. They can take the form of a traditional panel of discussants with a moderator, a fireside chat in which an individual gets interviewed by a moderator, or a roundtable in which the moderator(s) pose the questions to the audience for discussion. Our goal is to facilitate discussion – panels that propose a series of mini presentations are not what we are looking for. We would like you to be creative in proposing a format for your panels and welcome new ideas!

The deadline for submissions to the Panels & Fireside Chats venue is January 15, 2017, but we welcome ideas for high-profile plenary session panels and fireside chats at any time. If you have ideas for a high-profile panel, fireside chat, or roundtable discussion that would be of interest to a broad range of CHI attendees, please contact the venue’s co-chairs (panels@chi2018.acm.org) and/or the conference co-chairs (generalchairs@chi2018.acm.org) with your ideas as soon as possible.

Panels & Fireside Chats Chairs

Parmit Chilana, Simon Fraser University, Canada

Julie Kientz, University of Washington, USA

Conference Chairs

Regan Mandryk, University of Saskatchewan, Canada

Mark Hancock, University of Waterloo, Canada